An access service for a new archival era | The National Archives

15 March 2022

Putting accessibility first early in the alpha stage

14 June 2022Improving road lane detections with data augmentations

During a 4-day hackaton we guided several PhD students to define a problem and explore a few approaches for solving it.

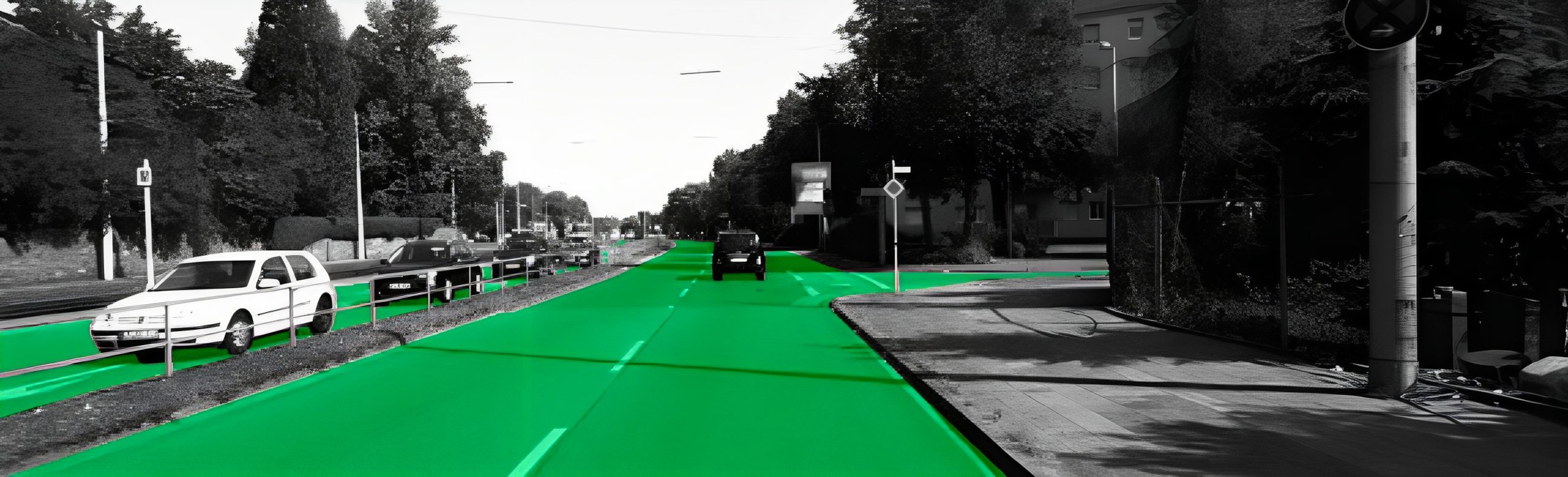

The illustration above shows a state-of-the art segmentation model that correctly isolates road lanes; however, the same model struggles with more challenging data, such as diverse perspectives. This blog posts discuses some techniques to mitigate the issue.

Over the last few years, Viapontica AI has led a wide-ranging project working with UK transport authorities to innovate novel methods for monitoring of the condition of road surfaces. One of the most promising approaches has been to use machine learning and computer vision to detect road defects from cameras mounted on public vehicles.

Since a lot of our work involves transferring academic research to industry applications, we collaborate closely with a few universities in the UK and like to involve students in projects soon before they graduate. Hackathons are a good way for students to get their feet wet and get a sense of our company culture and the type of projects we do, and we get a chance to meet candidates in a more relaxed way than a traditional job interview.

Here, we present the story of a 4-day hackaton during which one member of our team, Vera Dimovska, guided several PhD students to define a problem and explore a few approaches for solving it.

We kicked off the 2022 ICMS MAC-MIGS Modelling Camp hackathon early on a Monday morning, by presenting a few different challenges, which relate to different strands of our current work.

Students formed groups around their chosen challenge and we had a group of 8 ambitious PhD students. Advised by an academic supervisor from The University of Edinburgh's School of Mathemtiacs, the group took a stab at the problem of correctly detecting road lanes from RGB images.

Over the course of 4 days in May 2022, we collaborated with academic staff and PhD students from different UK universities as part of the 2022 ICMS MAC-MIGS Modelling Camp.

The Challenge

Most open-source road detection models, trained on U.S. data, tend to not generalise well to niche use cases and peculiarities of UK roads

On the machine learning front, we often face challenges with the distribution of the publicly available datasets. Most of the applications and AI models which we build are for either UK or European clients, while most of publicly available training data is often sourced either from the US, or from a basket of countries, of which UK and European countries forms a very small sample. This results in a few issues, for example, because:

- Road markings and surfaces differ between countries

- We may observe cobblestone and concrete streets, which are not present in the training data

- Angle of the camera used for recording varies

Baseline

Students in the hackaton decuded to look at Spatial CNN for Traffic Scene Understanding trained on the CULane Dataset which uses a DeepLab Network with VGG16 Backbone. Participants decided to use the model as a baseline. It is considered state-of-the-art reporting 96.53% accuracy on its test data. The sample below shows reliable detection of lanes.

Source: Pan, Xingang, et al. "Spatial as deep: Spatial cnn for traffic scene understanding." Proceedings of the AAAI Conference on Artificial Intelligence. Vol. 32. No. 1. 2018.

However, wehen we run the same model on some images from our own data collected in Edinburgh in Scotland, and in Durham in northeast England, we get unsatisfactory results, as it can be seen below.

Part of the issue, it can be hypothesized, is that camera inclinations vary a lot in our data, and we often encounter adverse weather conditions which change the visual appearance of the traffic scence. Let's focus on those two issues, which we suspect confuse the model, and try to imrpove the performance.

As students prepare to take a stab at the challenge, we help them define the problem as, “Improving state-of-the-art road/lane detection models given different camera inclinations or adverse weather conditions". We will try to improve the ML model with two strategies:

- Data Augmentation – Utilising data augmentation techniques that will synthetically recreate more realistic conditions: changing the camera inclination, accounting for motion blur, accounting for adverse weather conditions

- Adding more training data – Adding more data is the most obvious approach, even if the most resource intensive; We sought ways to be be creative to add data which is actually easy to label.

In our practice, we often need to tackle the issue of insufficient model generalisation. The schematic illustration below shows some useful techniques which allow fine-tuning of publicly available models to a particular use case.

Data Augmentations

Let's implement a few data augmentations.

Simulating blur

To mimic real life obstructions, such as out-of-focus blur, or motion blur, we can use two simple but effective blurs: gaussian blur and direction moving blur. Two sample outputs are shown below.

Simulating adverse weather conditions

Next, to mimic weather conditions, we superimpose fog and rain on the original RGB images. It may not look completely realistic to a human eye, but we hope it will be good enough for the AI model.

Simulating different road surfaces

Similarly, we superimpose cobblestone road texture on the images. Again, we do this with low fidelity. It's quick and dirty first step to evaluate the strategy. If it works, we can always come back and make improvements.

Varying camera angles

To mimic different camera inclinations, we transform the perspective with, by using the labelled lanes from the CULane dataset and we transformed the image perspective with OpenCV.

Adding more data

As we can see from the previous samples, the CULane dataset does not have the best camera resolutions. Diffeence in resolutions can also cause problems. We decide to get some high resolution 4K video, run inference on the HD dat, get some high confidence labels and (after some manual review) use those as ground truth data for further augmentations and training.

We guide to students to check out YouTube for videos with camera inclination similar to that of the CULane dataset and clear view of the road surface.

Closing Thoughts

As a company, we have spent more than 2 years researching the problem above, so we didn't expect students to tackle it in 4 days. But what is common between a short and a long project is the requirement to be able to quickly distill the essence of a problem and deliver quickly reliable directional answers.

At the onset, a project often needs to be broken down into smaller tractable problems, so we evaluated students on the basis of their ability to pull up their sleeves and generate realistic hypotheses which can be tested with the available constraints - short time frame, few GPUs, little training data. Depending on what worked, or didn't, we expected the best teams to fail quickly, learn from their mistakes, and ultimately make an evidence-backed recommendation if a technical approach is feasible or whether it is dead in the water.

Our clients may be SMEs, corporate or government clients who are running a pilot, building their next flagship product, or building a quick prototype. Either way, early in the innovation process, complex organizations need a clear go/no-go recommendation whether a particular idea will fly, or whether the delivery team needs to pivot to avoid waste of resources. The hackaton attempted to simulate that environment to give the students a realistic flavor of the challenges we tackle day-to-day on some of our more research heavy projects.

The examples in this post use data from the public domain to observe confidentiality obligations with client data.